Take a look at the list of Sensors available here. The TimeDeltaSensor: Waits for a timedelta after the task’s execution_date + schedule interval (Looks similar to the previous one no?).The DateTimeSensor: Waits until the specified datetime (Useful to add some delay to your DAGs).The ExternalTaskSensor: Waits for a different DAG or a task in a different DAG to complete for a specific execution date.The HivePartitionSensor: Waits for a partition to show up in Hive.The SqlSensor: Runs a sql statement repeatedly until a criteria is met.The S3KeySensor: Waits for a key to be present in a S3 bucket.The FileSensor: Waits for a file or folder to land in a filesystem.You need to wait for something? Use an Airflow Sensor.Īirflow brings different sensors, here are a non exhaustive list of the most commonly used: If yes it succeeds otherwise it will time out. With a Sensor, every 30 seconds it checks if the file exists at that location. Concretely, you goal is to verify if a file exists at a specific location. If yes, it succeeds, if not, it retries until it times out. What is a Sensor operator? A Sensor is an operator evaluating at a time interval if a criteria/condition is met or not. You will not leverage the benefits of Airflow and it will be a nightmare to maintain. As they need to wait for a file, they create a python function, do their stuff in it to wait for that file and call the python function with the PythonOperator. Well, when people are not aware about Sensors, they tend to use the PythonOperator.

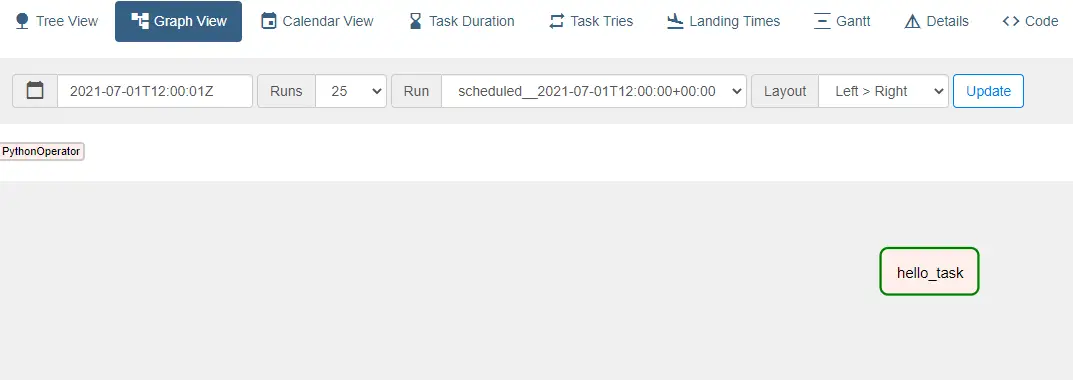

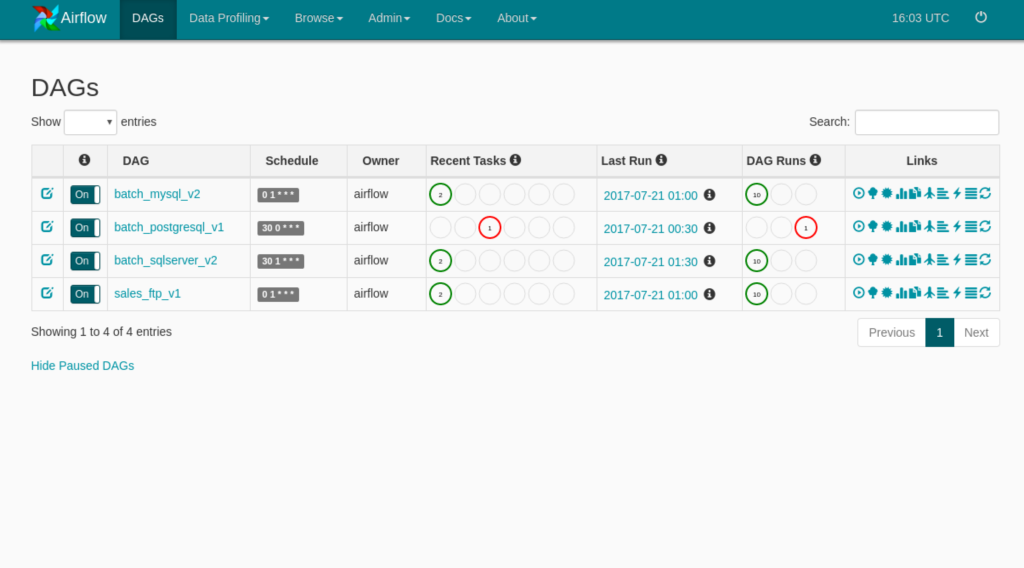

Ok, that being said, what are the tasks Partner A, B and C exactly? So, your goal is to wait for all files to be available before moving to the task Process. Hoping without delay, but we will come back to this later. For example, Partner A sends you data at 9:00 AM, B at 9:30 AM and C and 10:00 AM. # The DAG object we'll need this to instantiate a DAGįrom really common use case is when you have multiple partners (A, B and C in this example) and wait for the data coming from them each day at a more or less specific time. Our python script’s contents are reproduced below (to check for syntax issues just run the py file on the commandline): # Starting in Airflow 2.0, trying to overwrite a task will raise an exception. Users/theja/miniconda3/envs/datasci-dev/lib/python3.7/site-packages/airflow/models/dag.py:1342: PendingDeprecationWarning: The requested task could not be added to the DAG because a task with task_id create_tag_template_field_result is already in the DAG. INFO - Filling up the DagBag from /Users/theja/airflow/dags # visit localhost:8080 in the browser and enable the example dag in the home pageįor instance, when you start the webserver, you should seen an output similar to below: (datasci-dev) ttmac:lec05 theja$ airflow webserver -p 8080 # start the web server, default port is 8080 # but you can lay foundation somewhere else if you prefer From the quickstart page # airflow needs a home, ~/airflow is the default, Lets install the airflow package and get a server running. As listed above, a key benefit with airflow is that it allows us to describe a ML pipeline in code (and in python!).Īirflow works with graphs (spcifically, directed acyclic graphs or DAGs) that relate tasks to each other and describe their ordering.Įach node in the DAG is a task, with incoming arrows from other tasks implying that they are upstream dependencies.Orchestration using ECS and ECR - Part II

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed